Non-contact sexual offences: we need to recognise their harms

The Grok scandal reminded us that no contact doesn’t mean no harm

Back in January, like many other women, I was horrified to learn that Grok (X’s AI ‘assistant’) had generated 3 million sexualised images in an 11-day period. The Center for Countering Digital Hate calculated that this amounted to a rate of 190 images per minute, making the scale of sexualised deepfake creation strikingly apparent. On a personal level, as a young woman and journalist with an online presence, I felt a new kind of fear: the fear that this could happen to me too. It was this feeling that got me thinking: now that we know the volume of these images, shouldn’t we also be focussing on the impacts they have on victims?

Of course, misogynists didn’t share my concern, with some online rhetoric in the aftermath of the Grok scandal dismissing what Grok had facilitated - and the actions of those who had used it - as not ‘real’ and thus harmless. Though I was disappointed by such disregard for victims, I was not surprised; in fact, as deepfakes constitute a kind of non-contact sexual offence (NCSO), I had partly expected this. After all, a historic lack of understanding of the severity of NCSOs is in part what allowed Wayne Couzens to continue as a police officer despite previous reports of indecent exposure involving him, a position he would later abuse to rape and murder Sarah Everard in 2021.

Though we have made progress in the UK in taking these offences more seriously, with the Angiolini Inquiry Part 2 showing some efforts made by police and government to better prevent these crimes, the Grok scandal highlights that there is still a limited understanding of how NCSOs affect victims. To find out more about these impacts, I spoke to Fiona Vera-Gray, a Professor of sexual violence who helped to develop new police guidance and training on this category of crime.

Fiona explains that historic patterns of thinking, like “as long as you weren’t touched [...] then it’s not that serious”, mean “we don’t see them [NCSOs] as harmful, because we still look at harm through the lens of: is there physical harm? Or, is there psychological harm?”

Specifically, Fiona says we don’t think about “freedom-based harms”. She clarifies that recent research finds that for women these offences have “significant harms in terms of restricting their freedom, feeling like they can’t go into parks, into nighttime spaces, or go walking alone”.

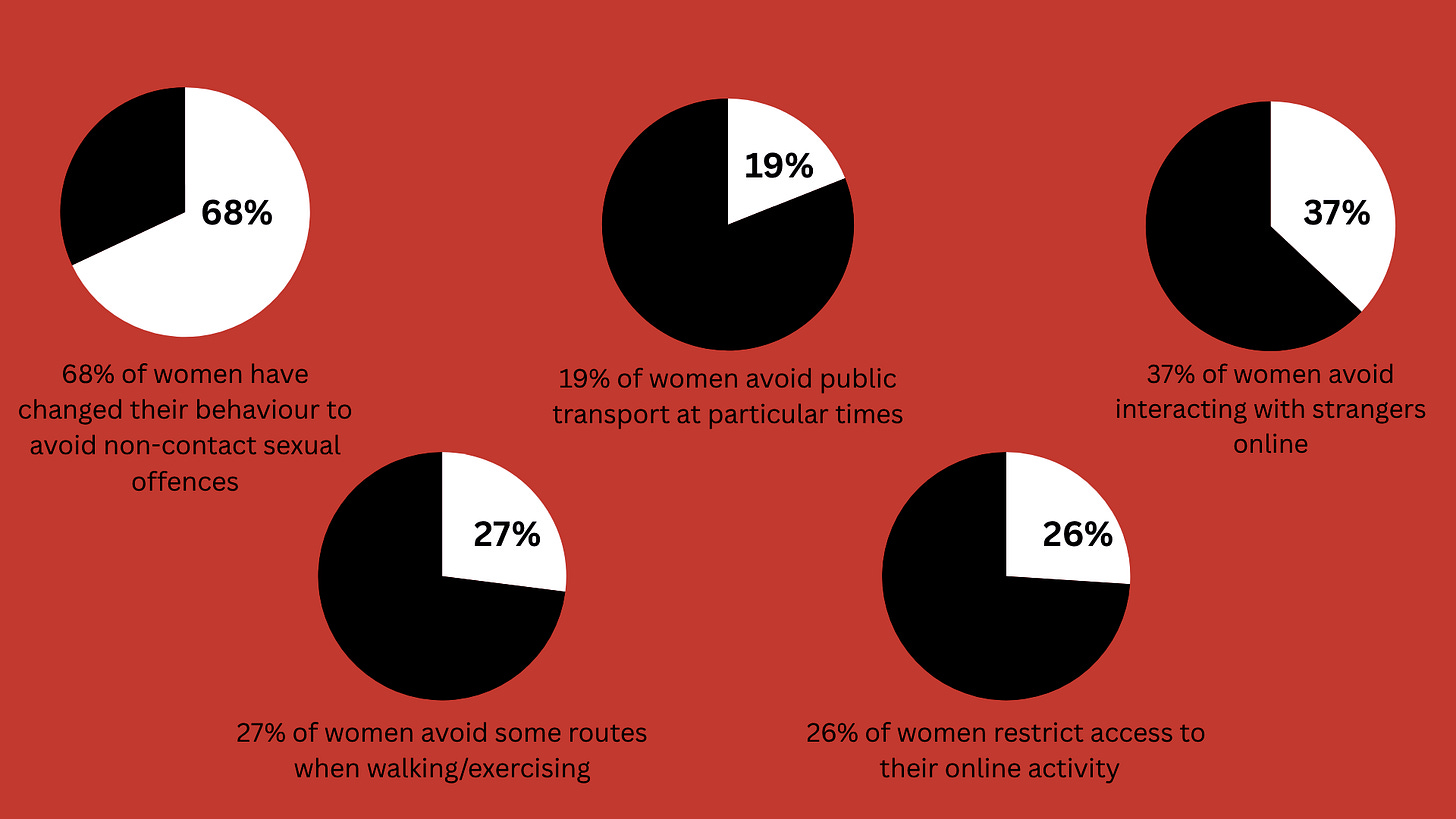

The research she is referring to is actually her own - co-authored with Professor Clare McGlynn, Jo Lovett and Dr Vicky Butterby - and was published in February. Freedom under threat reveals that considerable percentages of women are changing their behaviour both online and offline, with some of the results visualised below.

In our conversation, Fiona also discussed the rising frequency of digital NCSOs, citing the report’s finding that women under 24 are more likely to have experienced cyberflashing between the ages of 18 and 24 than women over 60 are to have experienced indecent exposure in their entire lives. Fiona says that the prevalence of digital offences like cyberflashing is significantly impacting young women’s ability “to participate in online life”.

“Increasingly the whole world is online. Your job prospects are online. You need to be making a name for yourself online. And if what we’re having is young women feeling like they need to pull themselves back from the online space, that’s going to have quite substantial impacts in their later life in terms of job opportunities, life opportunities, and just general opportunities to participate in civil society.”

Lucy Wynne, a secondary school teacher from Buckinghamshire, similarly shared her concerns about the impacts of deepfakes, saying “as a teacher and as a parent, and as a generally concerned citizen, I do worry about how deepfakes could potentially impact young girls, and young women both in school and out of school. I think that with the easy availability of really excellent quality AI it’s likely that this is only going to get worse”.

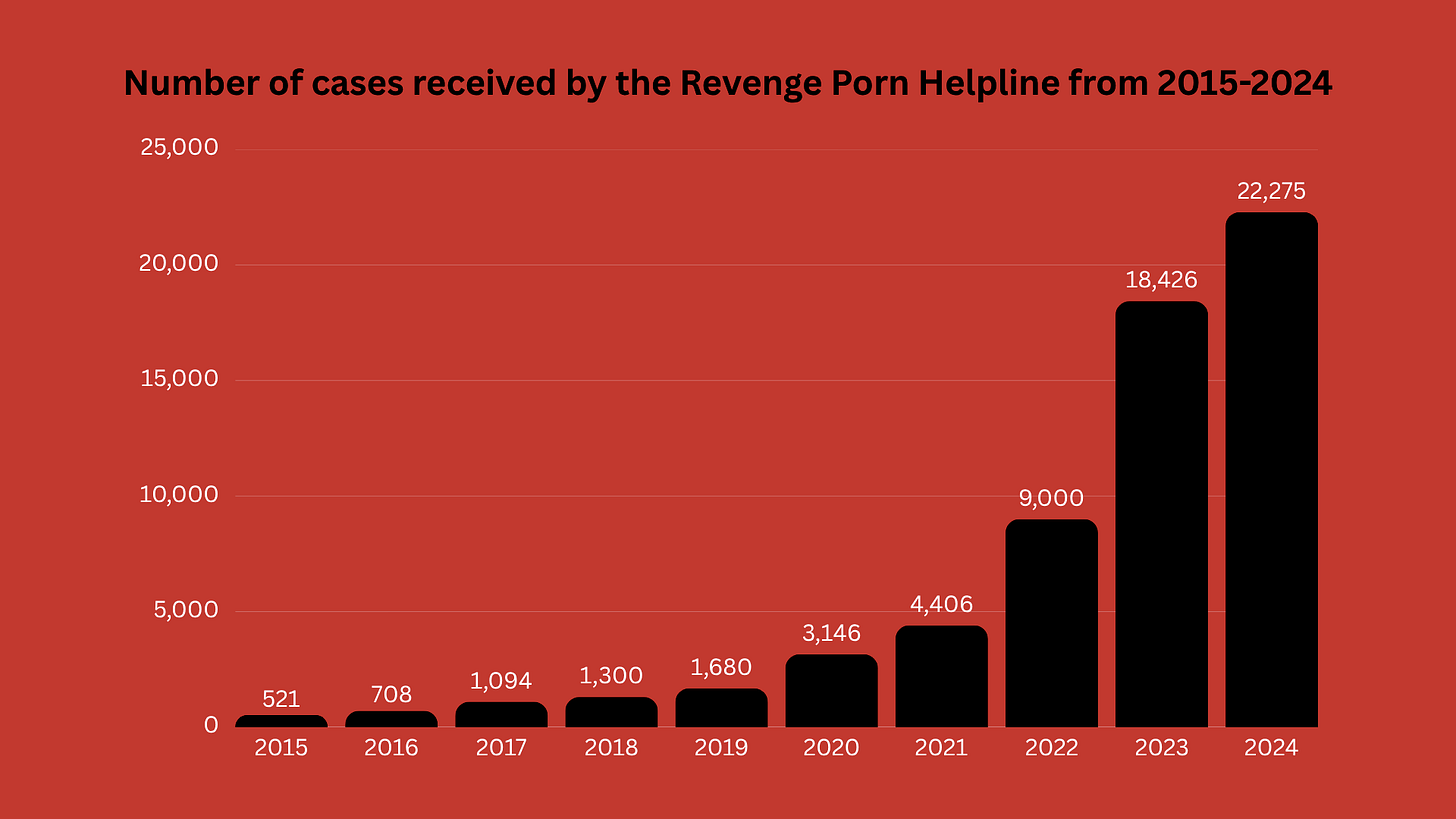

Already, ‘revenge porn’ incidents have increased over the last decade, as reflected in the number of cases handled by the Revenge Porn Helpline, shown below:

Whilst AI-generated sexualised deepfakes on this scale are a relatively recent phenomenon, image-based abuse is not new. In fact, the delayed recognition of how technology can threaten women’s safety is reflected in UK legislative history, detailed in the video below:

Fiona highlighted that one of the things her fellow advocate - and Glamour ‘stop image based abuse’ campaigner - Professor Clare McGlynn argues is that “we need to start future-proofing our law”.

She clarifies that Clare has been advocating for “an overarching law” which covers all kinds of image-based abuse, rather than individually legislating for cyberflashing, deepfake porn or revenge porn - but that hasn’t happened yet.

Though legislative future-proofing plays a role in preventing future NCSOs, Fiona emphasises that improving cultural understanding is even more important:

“The biggest thing I think that would make a real difference is for the recognition of harm to really be understood.”

“What we still don’t understand is harms that aren’t necessarily psychological harms. They aren’t physical harms, but they’re harms to you, your freedom, they’re harms to your ability to take up your right to participation, your freedom of movement, your bodily autonomy, those things we still as a society haven’t quite got our heads around.”

“They constrain the space of women and girls, the online space, but also the offline space. And once we understand that as an injury, as a harm, as serious in and of itself, I think that will then start to create the cultural change and then the change within the criminal justice system that we need for these kinds of offences.”

Ultimately, with the recent prevalence of digital sexual offences and the harms we know NCSOs cause their victims, overhauling the attitudes which have previously minimised these offences is more vital now than ever.