The Moderation Paradox: why social media users want both free speech and censorship

As proposals for tighter social media restrictions are raised in parliament, should we expect a corresponding rise in censorship by the platforms themselves?

A proposal to ban social media for children and young people was defeated in parliament on 9 March 2026, though whether this is a plausible, let alone definitive, conclusion remains an open question.

Dr Sejal Parmar Setty, a professor at the University of Surrey, claims a blanket ban would treat young people as a ‘single homogeneous group, ignoring the diversity of their experiences, needs and circumstances’.

Earlier this year, the Foreign Affairs Committee described social media as a ‘nefarious actor’ in British democracy, warning that ‘time and again, we’ve been told that social media platforms are being weaponised to sow division and undermine trust’.

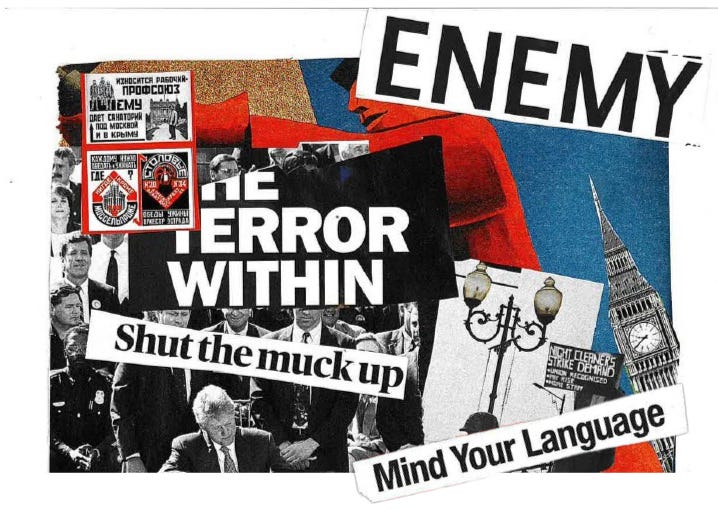

In both cases the warnings were defused in the name of freedom of speech, and by concerns that individuals would simply seek similar content elsewhere, potentially consuming more toxic material. Within these contradictions a paradox arises: how do we balance free speech and censorship?

The paradox of balancing free speech and censorship is highlighted through the resurgence of Commons committees. Photograph: Adobe Stock

Friederike Quint, a research associate and doctoral candidate at the Technical University of Munich who works on the “Transparency in Content Moderation” project, highlights that ‘in many democracies, including the UK, freedom of expression is widely seen as a core societal principle, and people tend to support it strongly when asked about it in the abstract.’

This was demonstrated by Sir John Whittingdale MP’s statement on 9 March 2026 at a Foreign Affairs Committee session on media freedom: ‘Over the course of the inquiry, we have heard from Governments that disinformation currently represents one of the greatest threats to democracies.’

Quint argues that, ‘When the governments are confronted with concrete scenarios such as threats, harassment, or hateful speech, the way they weigh the importance of upholding or protecting this value often changes.’

Despite the arguments for censorship, Quint stresses that exposure to toxic language online is unavoidable, stating: ‘many users don’t necessarily condone toxic speech, but they have somewhat adapted to it or even become resigned towards it’.

Quint argues that this environment ‘creates a somewhat paradoxical dynamic’. On the one hand, there is the normalisation of toxicity; on the other, the question of whether platforms are actually able, or willing, to enforce their rules effectively.

Jillian York is Director for International Freedom of Expression at the Electronic Frontier Foundation (EFF), an advocacy organisation focusing on policy and regulation relating to free expression, privacy and innovation.

Jillian York, Director for International Freedom of Expression at the EFF. Photograph: EFF Staff Profile

When asked about the risks that government moderation of online speech may pose, she says: ‘Any regulations have to be necessary. They have to be proportionate and they have to have safeguards…’

‘In the UK right now, what we’re seeing with this latest push is that they’re trying to take the minimal safeguards that exist through Ofcom, they’re already trying to pull that back and give more power to elected officials, which is really troubling. And we’ve seen how troubling that can be from other countries.’

At the same time, the opacity of platform moderation adds another layer of concern. ‘We don’t have visibility into how these systems work,’ York says. ‘We don’t know what we’re not seeing’. Over the years, she adds, this has led to ‘a lot of accidental censorship’.

Responsibility, then, is diffuse. Within parliament, the role of the censor appears to fall upon the social media companies themselves.

Edward Morello MP declared during a Foreign Affairs Committee session: ‘The underlying issue with disinformation and misinformation… to the public, social media platforms feel like cesspools of misinformation and disinformation.

‘The inherent problem is that you are an engagement-driven business, which means eyeballs and getting people to engage with and share content, so how do you offset that?’

According to Taylor Annabell, a platform governance and digital culture researcher and Lecturer at Cardiff University, ‘it would be unreasonable and unscalable to moderate all content.’

Annabell notes a persistent confusion in public debate: ‘the discursive slippage between censorship and moderation. The DSA has been referred to as a “tool for censorship” when it does not moderate lawful content’.

If there is a consensus on social media censorship, it is that no single actor can resolve the tension alone. Quint’s research suggests that citizens increasingly see moderation as a shared responsibility between governments, platforms and users themselves.

For York, that responsibility must be grounded in stronger safeguards and in the kind of systemic change long advocated by the EFF. ‘For me, it’s really important that we work to change those things by ensuring that companies are transparent, making sure that they’re paying a living wage and giving appropriate benefits to their staff…’ she says.

‘But also making sure that when it comes to automation, that there is transparency around that, what it looks like, how it’s being used, and making sure that there is a human involved in at least the appeal decision’.

The EFF’s work has consistently focused on these principles, holding platforms accountable for how they moderate content. Its approach reflects a broader argument running through this debate: the question is not just whether to moderate, but how to do so transparently and with meaningful human oversight.

Social media users want both the freedom to speak and protection from harm, which makes the paradox unlikely to disappear. Instead, bridging the divide may mean building more transparent systems, as the EFF argues.